Military planners spent the first two days of the Association of the United States Army’s annual meeting outlining the future of artificial intelligence for the service and tracing back from this imagined future to the needs of the present.

This is a world where AI is so seamless and ubiquitous that it factors into everything from rifle sights to logistical management. It is a future where every soldier is a node covered in sensors, and every access point to that network is under constant threat by enemies moving invisibly through the very parts of the electromagnetic spectrum that make networks possible. It is a future where weapons can, on their own, interpret the world, position themselves within it, plot a course of action, and then, in the most extreme situations, follow through.

It is a world of rich battlefield data, hyperfast machines and vulnerable humans. And it is discussed as an inevitability.

“We need AI for the speed at which we believe we will fight future wars,” said Brig. Gen. Matthew Easley, director of the Army AI Task Force. Easley is one of a handful of people with an outsized role in shaping how militaries adopt AI.

The past of data future

Before the Army can build the AI it needs, the service needs to collect the data that will fuel and train its machines. In the shortest terms, that means the task force’s first areas of focus will include preventative maintenance and talent management, where the Army is gathering a wealth of data. Processing what is already collected has the potential for an outsized impact on the logistics and business side of administering the Army.

For AI to matter in combat, the Army will need to build a database of what sensor-readable events happen in battle, and then refine that data to ultimately provide useful information to soldiers. Getting there means turning every member of the infantry into a sensor.

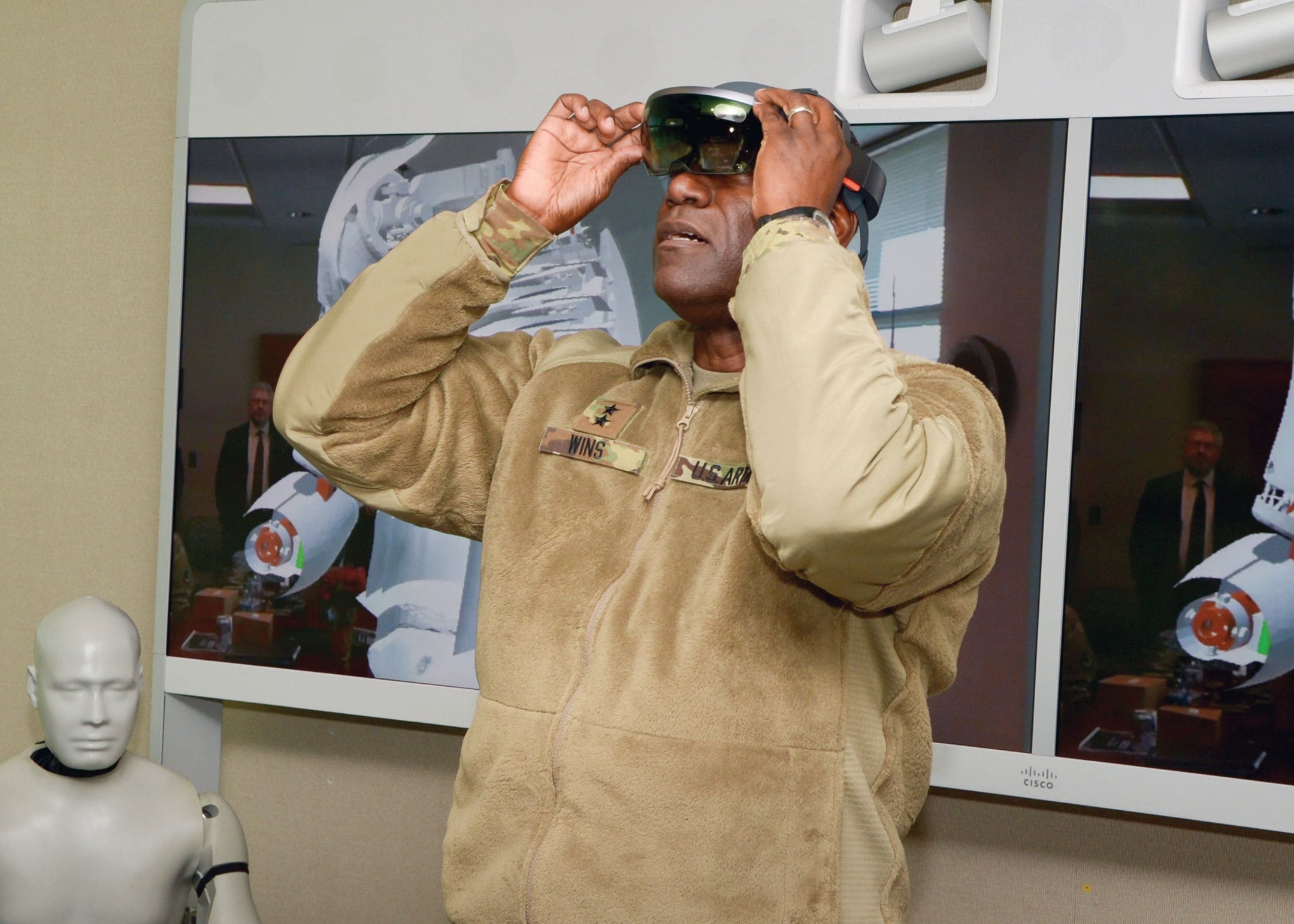

“Soldier lethality is fielding the Integrated Visual Augmentation Systems, or our IVAS soldier goggles that each of our infantry soldiers will be wearing,” Easley said. “In the short term, we are looking at fielding nearly 200,000 of these systems.”

The IVAS is built on top of Microsoft’s HoloLens augmented reality tool. That the equipment has been explicitly tied to not just military use, but military use in combat, led to protests from workers at Microsoft who objected to the product of their labor being used with “intent to harm.”

And with IVAS in place, Easley imagines a scenario where IVAS sensors plot fields of fire for every soldier in a squad, up through a platoon and beyond.

“By the time it gets to [a] battalion commander,” Easley said, “they’re able to say where their dead zones are in front of [the] defensive line. They’ll know what their soldiers can touch right now, and they’ll know what they can’t touch right now.”

Easley compared the overall effect to the data collection done by commercial companies through the sensors on smartphones — devices that build detailed pictures of the individuals carrying them.

Fitting sensors to infantry, vehicles or drones can help build the data the Army needs to power AI. Another path involves creating synthetic data. While the Army has largely fought the same type of enemy for the past 18 years, preparing for the future means designing systems that can handle the full range of vehicles and weapons of a professional military.

With insurgents unlikely to field tanks or attack helicopters at scale anytime soon, the Army may need to generate synthetic data to train an AI to fight a near-peer adversary.

Faster, stronger, better, more autonomous

“I want to proof the threat,” said Bruce Jette, the Army’s assistant secretary for acquisition, logistics and technology, while speaking at a C4ISRNET event on artificial intelligence at AUSA. Jette then set out the kind of capability he wants AI to provide, starting from the perspective of a tank turret.

“Flip the switch on, it hunts for targets, it finds targets, it classifies targets. That’s a Volkswagen, that’s a BTR [Russian-origin armored personnel carrier], that’s a BMP [Russian-origin infantry fighting vehicle]. It determines whether a target is a threat or not. The Volkswagen’s not a threat, the BTR is probably a threat, the BMP is a threat, and it prioritizes them. BMP is probably more dangerous than the BTR. And then it classifies which one’s [an] imminent threat, one’s pointing towards you, one’s driving away, those type of things, and then it does a firing solution to the target, which one’s going to fire first, then it has all the firing solutions and shoots it.”

Enter Jette’s ideal end state for AI: an armed machine that senses the world around it, interprets that data, plots a course of action and then fires a weapon. It is the observe–orient–decide–act cycle without a human in the loop, and Jette was explicit on that point.

“Did you hear me anywhere in there say ‘man in the loop?,’ ” Jette said. “Of course, I have people throwing their hands up about ‘Terminator,’ I did this for a reason. If you break it into little pieces and then try to assemble it, there’ll be 1,000 interface problems. I tell you to do it once through, and then I put the interface in for any safety concerns we want. It’s much more fluid.”

In Jette’s end state, the AI of the vehicle is designed to be fully lethal and autonomous, and then the safety features are added in later — a precautionary stop, a deliberate calming intrusion into an already complete system.

Jette was light on the details of how to get from the present to the thinking tanks of tomorrow’s wars. But it is a process that will, by necessity, involve buy-in and collaboration with industry to deliver the tools, whether it comes as a gestalt whole or in a thousand little pieces.

Learning machines, fighting machines

Autonomous kill decisions, with or without humans in the loop, are a matter of still-debated international legal and ethical concern. That likely means that Jette’s thought experiment tank is part of a more distant future than a host of other weapons. The existence of small and cheap battlefield robots, however, means that we are likely to see AI used against drones in the more immediate future.

Before robots fight people, robots will fight robots. Before that, AI will mostly manage spreadsheets and maintenance requests.

“There are systems now that can take down a UAS pretty quickly with little collateral damage,” Easley said. “I can imagine those systems becoming much more autonomous in the short term than many of our other systems.”

Autonomous systems designed to counter other fast, autonomous systems without people on board are already in place. The aptly named Counter Rocket, Artillery, and Mortar, or C-RAM, systems use autonomous sensing and reaction to specifically destroy projectiles pointed at humans. Likewise, autonomy exists on the battlefield in systems like loitering munitions designed to search for and then destroy anti-air radar defense systems.

Iterating AI will mean finding a new space of what is acceptable risk for machines sent into combat.

“From a testing and evaluation perspective, we want a risk knob. I want the commander to be able to go maximum risk, minimum risk,” said Brian Sadler, a senior research scientist at the Army Research Laboratory. “When he’s willing to take that risk, that’s OK. He knows his current rules of engagement, he knows where he’s operating, he knows if he uses some platforms; he’s willing to make that sacrifice.”

In his work at the Vehicle Technology Directorate of the Army Combat Capabilities Development Command, Sadler is tasked with bringing the science of AI up to date with the engineered reality of it. It is not enough to get AI to work; it has to be understood.

“If people don’t trust AI, people won’t use it,” Tim Barton, chief technology officer at Leidos, said at the C4ISRNET event.

Building that trust is an effort that industry and the Army have to tackle from multiple angles. Part of it involves iterating the design of AI tools with the people in the field who will use them so that the information analyzed and the product produced have immediate value.

“AI should be introduced to soldiers as an augmentation system,” said Lt. Col. Chris Lowrance, a project manager in the Army’s AI Task Force. “The system needs to enhance capability and reduce cognitive load.”

Away from but adjacent to the battlefield, Sadler pointed to tools that can provide immediate value even as they’re iterated upon.

“If it’s not a safety of life mission, I can interact with that analyst continuously over time in some kind of spiral development cycle for that product, which I can slowly whittle down to something better and better, and even in the get-go we’re helping the analyst quite a bit,” Sadler said.

“I think Project Maven is the poster child for this,” he added, referring to the Google-started tool that identifies objects from drone footage.

Project Maven is the rare intelligence tool that found its way into the public consciousness. It was built on top of open-source tools, and workers at Google circulated a petition objecting to the role of their labor in creating something that could “lead to potentially lethal outcomes.” The worker protest led the Silicon Valley giant to outline new principles for its own use of AI.

Ultimately, the experience of engineering AI is vastly different from that of the end user, for whom AI fades seamlessly into the background, becoming just an ambient part of modern life.

If the future plays out as described, AI will move from a hyped feature, to a normal component of software, to an invisible processor that runs all the time.

“Once we succeed in AI,” said Danielle Tarraf, a senior information scientist at the think tank Rand, “it will become invisible like control systems, noticed only in failure.”

Kelsey Atherton blogs about military technology for C4ISRNET, Fifth Domain, Defense News, and Military Times. He previously wrote for Popular Science, and also created, solicited, and edited content for a group blog on political science fiction and international security.