Humans learn by doing. The shared experience of hardship, enduring and overcoming is what bonds disparate recruits into functional teams, who over time learn each other’s weaknesses and strengths and, ideally at least, then adapt to best use each other. As more robots move onto the battlefield, DARPA wants those machines to work together, learn from each other to do better and move away from actions which cause regret. To spark research into this area, the Pentagon’s blue sky projects wing launched “CREATE,” or “Context Reasoning for Autonomous Teaming.”

The Artificial Intelligence Exploration Opportunity, announced Sept. 3, looks for research into how a group of small and disparate uncrewed vehicles could work together autonomously. Phase 1 requires feasibility studies. Phase 2 refines the AI teaming techniques and algorithms from Phase 1 so they work on existing vehicles, either in simulation or on the actual hardware.

In much the same way that a group of people make decisions together on the fly, the solicitation notes that “local decision making is less informed and suboptimal but is infinitely scalable, naturally applicable to heterogeneous teams, and fast.”

For robots that have to work together in battle, those last traits are especially important, as they allow independent autonomous action, “thus breaking the reliance on centralized C2 and the need for pre-planned cost function definition.”

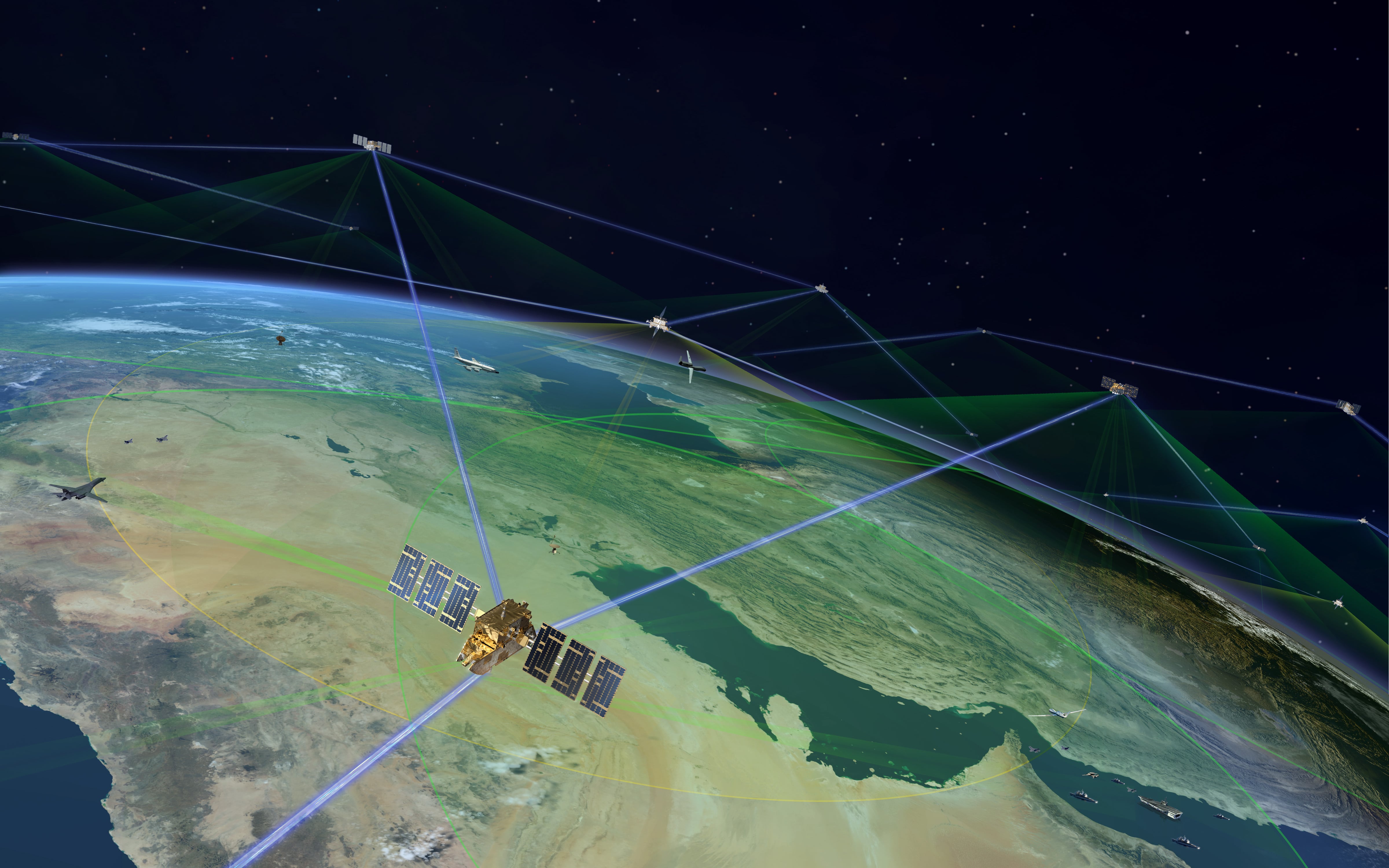

This is a step beyond the remotely directed and controlled systems of today, which use extensive communications networks to give humans fine-tuned control over how machines move. Should those networks break down, the goal is machines that can move toward objectives on their own, even if those moves are less efficient or effective than the choices a human operator would have made. Advances in electronic warfare, combined with fears about the loss of communication networks, both terrestrial and in orbit, are part of what’s driving military research and investment in autonomous machines.

What sets CREATE apart from, say, swarming systems of quadcopters, is that DARPA wants to find a framework that can communicate with a heterogeneous group of machines: likely quadcopters and unmanned ground vehicles, as well as different kinds of flying and swimming robots. In other words, a whole mechanical menagerie working to a similar purpose. With the right AI tool, the machine-machine team should be able to discern the context of where it is and what is happening, and then act independently. In addition, the team should be able to meet multiple spontaneous goals that arise over the course of a mission.

Getting to that point means a system that can learn and, especially, a system that can learn from mistakes.

“Agents within the team will have mechanisms for regulation to ensure (favorable) emergent behavior of the team to (1) better ensure the desired mission outcome and (2) bound the cost of unintended adverse action or ‘regret,’” reads the solicitation.

While “regret” can carry a whole lot of subtext when it comes to actions in war, it’s worth tempering that with the way regret is used as a standard term to describe a particular mechanism in reinforcement learning. While some learning methods seek to focus entirely on maximizing reward, designing a military machine to avoid wrong actions can be especially important.
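For readers unfamiliar with the term, one common textbook sense of regret is the gap between the payoff of the best available action and the payoff of the action actually chosen, added up over time. The sketch below illustrates only that general idea; it is not drawn from the solicitation, and the payoff numbers, the list of choices and the function name `cumulative_regret` are all made up for illustration.

```python
def cumulative_regret(true_means, chosen_arms):
    """Return the running total of expected regret after each choice.

    true_means:  expected payoff of each possible action (hypothetical values)
    chosen_arms: index of the action picked at each step
    """
    best = max(true_means)  # payoff of the best action in hindsight
    total = 0.0
    history = []
    for arm in chosen_arms:
        # Regret for one step: what the best action would have earned
        # minus what the chosen action is expected to earn.
        total += best - true_means[arm]
        history.append(total)
    return history


# Hypothetical expected payoffs for three candidate actions.
true_means = [0.2, 0.5, 0.8]
# An agent that tries each action once, then settles on the best one.
choices = [0, 1, 2, 2, 2]
regret = cumulative_regret(true_means, choices)
```

In this toy run the total stops growing once the agent settles on the best action, which is the behavior a regret-bounding learner aims for.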

“Fully autonomous agents have the potential to take undesirable action,” reads the solicitation. “While ‘hard-coded’ safeguards may be one possible solution, it is unlikely that commanders will anticipate all safeguards required for all possible eventualities for a team while maintaining an interesting level of autonomy.”

For the machines to work as independently and autonomously as desired, the AI guiding the robots will have to seek to meet a reward condition while avoiding negative rewards, or regrets. Some safeguards may need to be hard-coded, with others derived from context.

Regret, too, could mean suboptimal execution of a mission. DARPA offers this example: consider a mixed team of robots gathering surveillance information over an area inefficiently, and then working out better ways to do so in the future. What other behaviors the robots will need to learn to avoid will likely come later in the process, and can vary from task to task.

CREATE is the possible start of an interoperable AI tool. The end result, at least as envisioned at the start, is a way for many machines to work together to perform the missions humans task them with, and to do it independently, improvising as circumstances require.

It is a way to make machines a little more human, to make command a little more mission, and to make autonomy a little more useful.

Kelsey Atherton blogs about military technology for C4ISRNET, Fifth Domain, Defense News, and Military Times. He previously wrote for Popular Science, and also created, solicited, and edited content for a group blog on political science fiction and international security.