Success in cybersecurity is defined in negative space. It is the absence of visible problems: professionals toil away against threats and vulnerabilities, and their hard work enables smooth and seamless operation elsewhere. Except, as it turns out, defining success as the absence of visible problems makes it hard to actually stop those problems. In a panel on “Measuring Cybersecurity Effectiveness in a Threat-Based World” at the 2019 RSA cybersecurity conference, practitioners from across the intelligence and government communities talked about how affirmative metrics led to better security.

“If you put things in simple easy explainable ways, it’s easy to get traction with senior-level folks,” said Marianne Bailey, deputy national manager for national security systems and a senior cybersecurity executive at the National Security Agency. Besides her work at the NSA, Bailey was involved in a process to roll out clear metrics for cybersecurity across the Department of Defense. To get that done, the metrics had to be clear enough that top brass could understand them, brag about them, and then roast each other until they improved. Roasting?

“In early session, one service had a bad score, and that person had stars on his shoulder, felt beat up, didn’t feel he had the ability to change it,” said Bailey. After getting the new metrics, that same senior-level person had tools and data and knew what to do to improve things. He was “able to brag about actions he took, things that he shut down.”

Interservice rivalry isn’t the most straightforward path to improving, say, the patch rate of computers in the hands of sailors or privates, but the metrics gave the chain of command a clear way to understand the task. Prompted by actual security needs, and perhaps a bit of that rivalry, commanders could then clearly direct changes, see them implemented, and have new scores to show off the next time they faced their peers.

Getting everyone on the same page takes a lot of work. The DoDCAR standard (adopted after an acronym that spelled out NASCAR was rejected) creates shared terminology, standards, definitions and metrics. DoDCAR has shifted not only behaviors within the Department but acquisitions, too.

“We were about to amass millions in midpoint solutions,” said Bailey. DoDCAR “made a course correction, by showing that we weren’t gonna get a lot out of that.”

Clear metrics not only allow for easy assessment of practices and risks, they also allow for regular reassessment of how well security protocols are being followed.

“One of the key things is what gets measured gets done,” said Kevin Cox, program manager at the Cybersecurity and Infrastructure Security Agency.

“Incentivizing people to take action that results in nothing visible is a hard sell. This is a way to provide something visible,” said Matthew Scholl, chief of the computer security division at NIST. He then referenced his time in the Army, quipping “Joe does what gets inspected.”

Compliance as currently practiced is not necessarily tailored to threats or business needs, but when compliance data is rolled up into dashboards, it can provide a view that is linked to the actual threats. It becomes actionable information and, therefore, inspectable. This matters at the 99 non-military federal agencies that Cox works with, and it matters at the Pentagon.

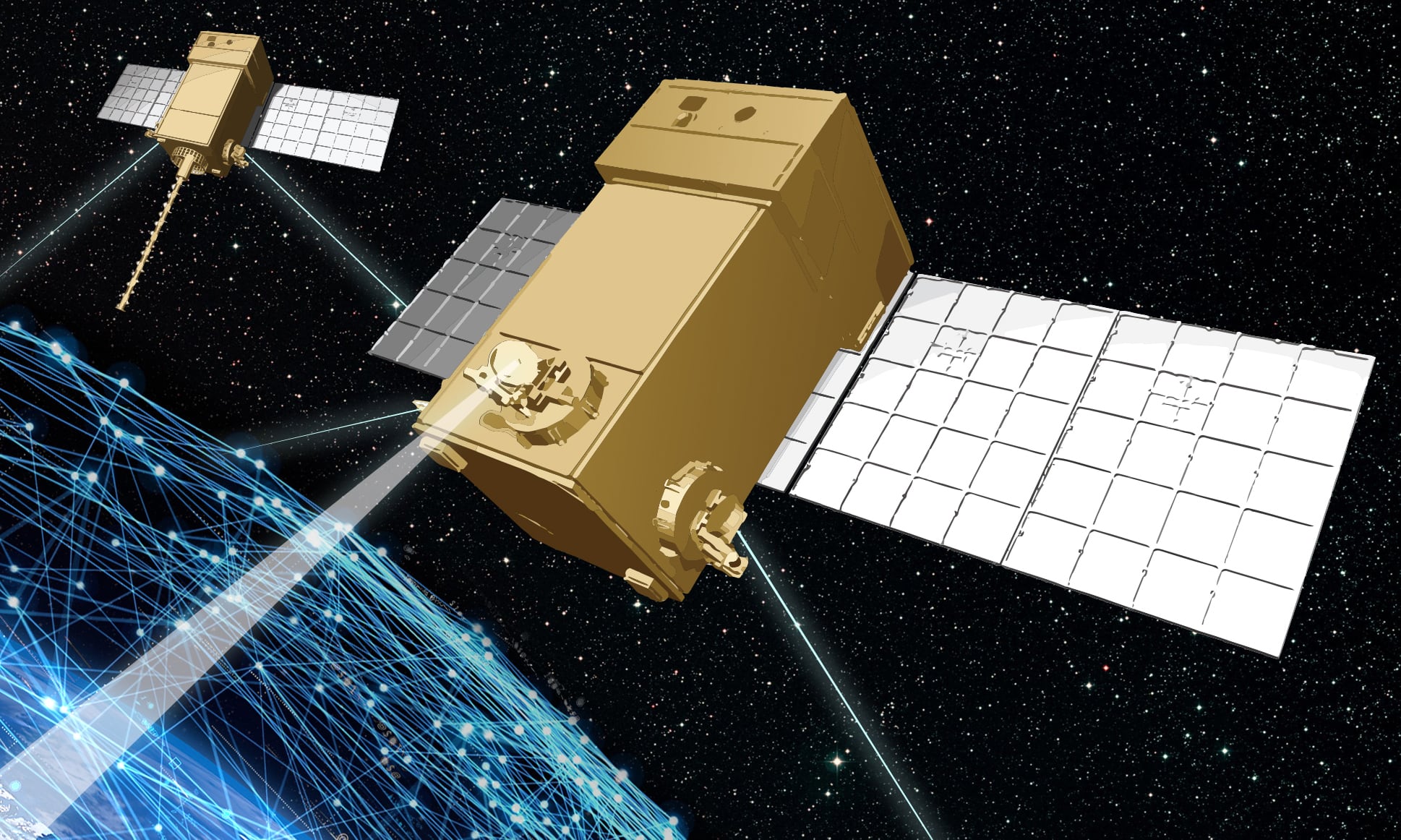

“Look at weapons today, they’re very connected,” said Bailey. “People don’t think of them as IT systems we need to be worried about.”

But they are. Creating clear metrics and a shared language and taxonomy for managing cyber risk and cybersecurity compliance, and then giving commanders the tools they need to assess compliance regularly, helps ensure that cybersecurity risks don’t turn IT problems with weapons into kinetic problems with weapons.

Kelsey Atherton blogs about military technology for C4ISRNET, Fifth Domain, Defense News, and Military Times. He previously wrote for Popular Science, and also created, solicited, and edited content for a group blog on political science fiction and international security.