A decision is only as good as the data used to make it.

When a slight delay can result in significant consequences, milliseconds matter. Lags or inaccuracies in data processing and communication can lead to missed opportunities, compromised missions, and increased risks to personnel.

A database needs to be as ready as the personnel using it, because the infrastructure is the foundation of the data delivery process: ingesting, processing, and delivering massive amounts of data in the blink of an eye. Databases need to feed defense teams data rapidly and precisely in critical situations at all times. Here are some capabilities to evaluate when determining your database’s readiness for mission-critical demands.

Massive ingestion at scale

In the vast landscape of defense data, information streams in from a myriad of global sources. With as many as 150,000 potential sources and data capture occurring tens of millions of times per second, a database with robust real-time ingestion capabilities is non-negotiable. The challenge, however, lies in the scalability of storage solutions. Many databases struggle to accommodate the exponential growth of data without compromising performance or escalating costs. As a result, defense agencies often face rising expenses as their data management needs expand.

Databases engineered with scalability at their core maintain their performance levels even as data demands intensify, ensuring that critical operations can rely on uninterrupted, real-time insights at all times. Therefore, understanding the scalability limits and performance capabilities of a database system is crucial for defense organizations aiming to maintain operational efficiency and strategic advantage in an ever-evolving global data landscape.

High-speed data processing

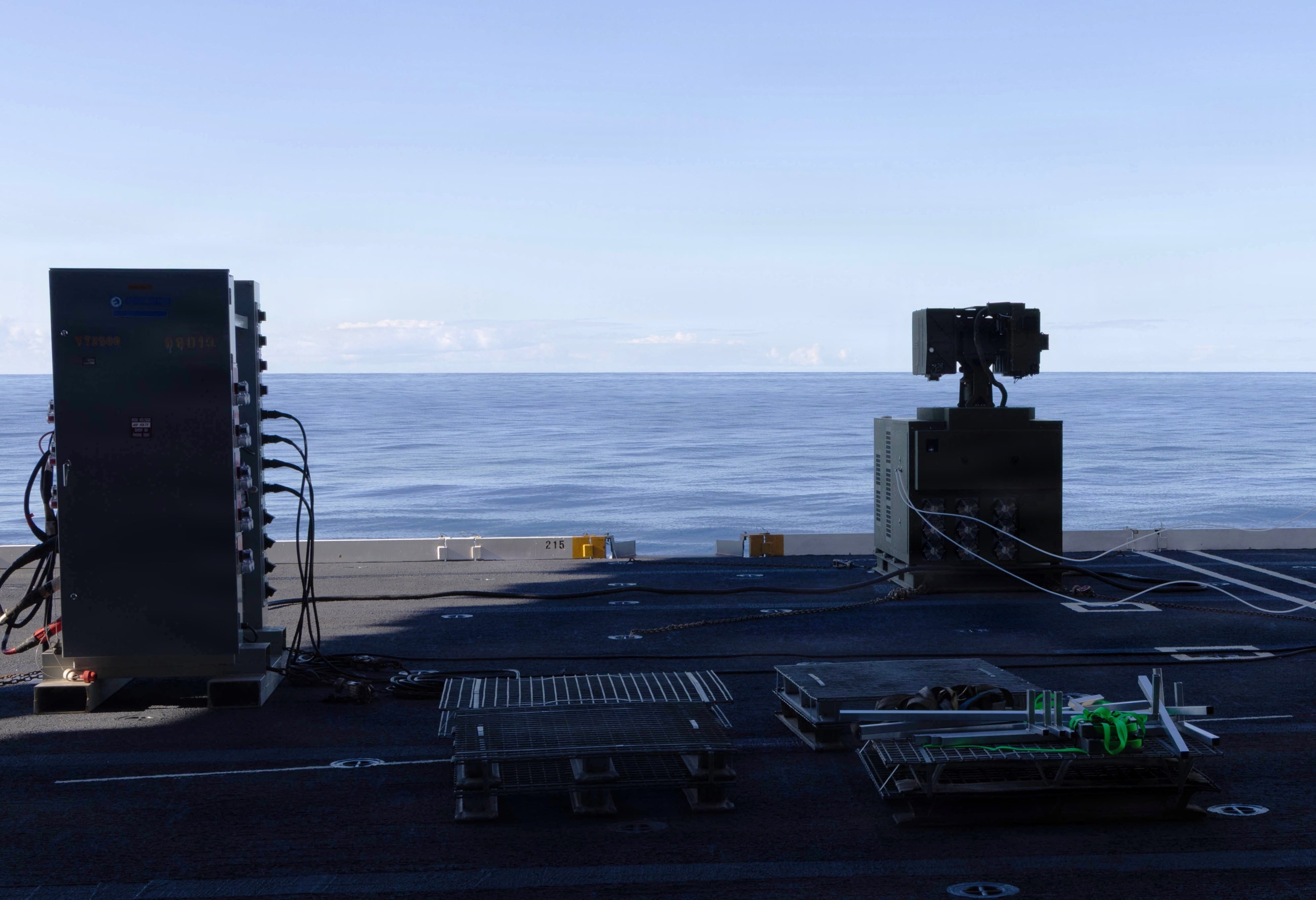

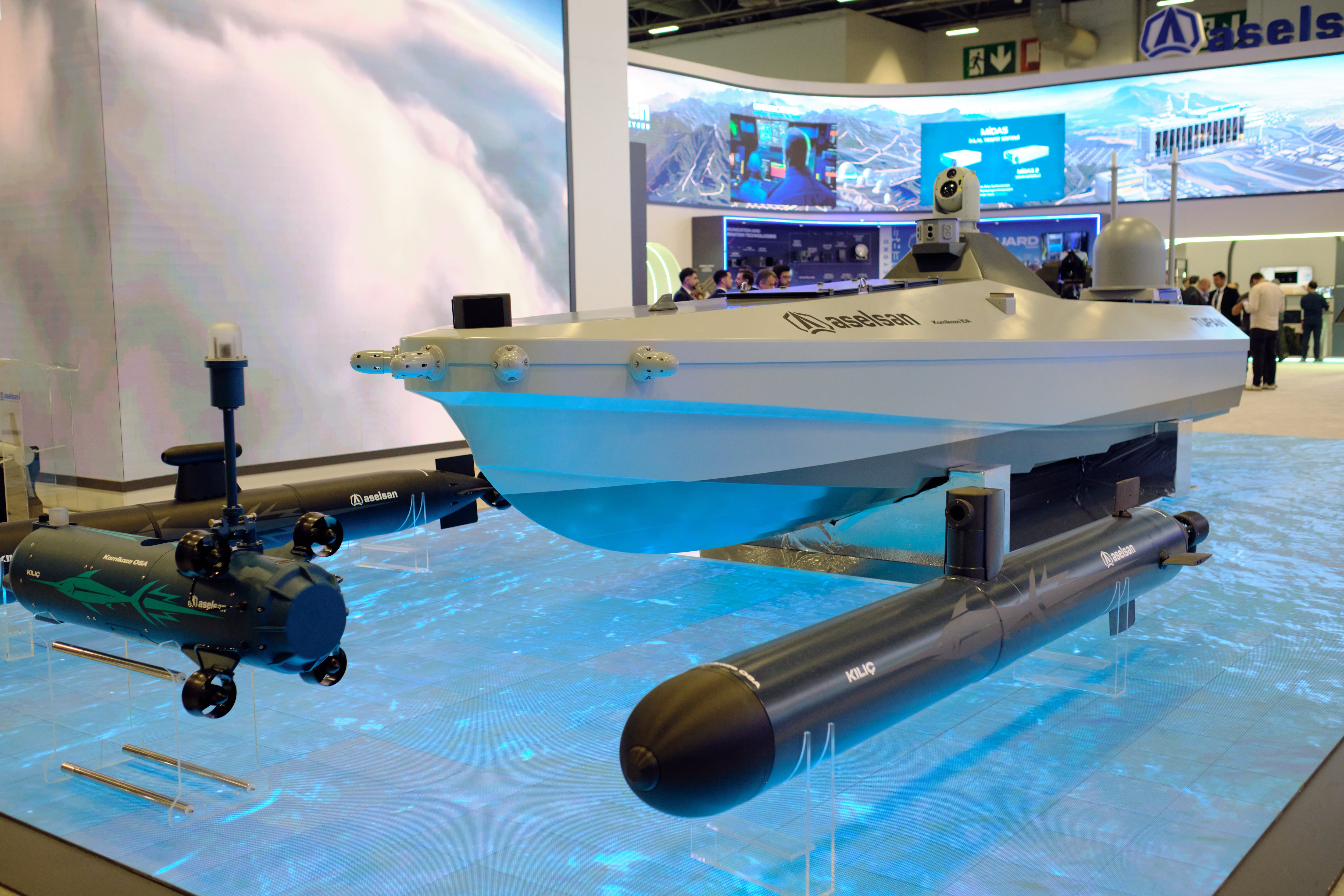

Following ingestion, a database needs to be able to process the data for any AI/ML engine. Data processing typically occurs remotely, often far from where decision-making occurs. High-speed processing occurs from the edge to the tactical cloud — from an unmanned vehicle in one country to the command center in another, for example. During replication between the edge and tactical cloud, there needs to be virtually zero delay, whether processing gigabytes or petabytes of data.

The data needs to move from the data-gathering point to the decision point with the lowest possible latency because decision-makers can’t wait. Tactical processing can occur anywhere from a Humvee to a ship, whether or not there’s a network connection. Ideally, database requests should be sent directly to the node holding the data, with no intermediate hops or processing in between. Rounding out the process, the database needs to compress the data for transfer back to the core.
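To make the idea of sending a request straight to the node that holds the data more concrete, here is a minimal sketch of client-side routing with a partition map. Everything in it is an assumption made for illustration: the partition count, the SHA-256 hash, the round-robin ownership scheme, and the names `partition_id`, `build_partition_map`, and `route` are hypothetical and do not describe any particular product's internals.

```python
import hashlib

# Assumed partition count for this sketch only.
N_PARTITIONS = 4096


def partition_id(key: str) -> int:
    """Hash a record key to a fixed partition number."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % N_PARTITIONS


def build_partition_map(nodes):
    """Assign each partition to a node (simple round-robin, hypothetical)."""
    return {pid: nodes[pid % len(nodes)] for pid in range(N_PARTITIONS)}


def route(key, partition_map):
    """Return the node that owns the key, so the client can contact it directly."""
    return partition_map[partition_id(key)]


nodes = ["node-a", "node-b", "node-c"]
pmap = build_partition_map(nodes)
owner = route("sensor:1234", pmap)
print(f"sensor:1234 is served by {owner}")
```

Because the client computes the owner locally, a read or write takes a single network hop rather than passing through a coordinator or proxy first.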

Cross datacenter replication

Cross datacenter replication (XDR) is an active-active feature that replicates data asynchronously: the master node commits the write transaction right away and then updates the replica nodes via XDR as quickly as possible. It is a highly sought-after capability that provides dynamic, fine-grained control over data replication across geographically separate clusters.

Such capability becomes vital in defense scenarios where command centers operate at great distances from the field. XDR facilitates the rapid, low-latency transfer of data between data centers, ensuring that relevant information is accessible across regions while adhering to data locality regulations.
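The asynchronous pattern described in this section can be illustrated with a toy model: a write commits locally and returns at once, and a separate shipping step forwards it to remote clusters afterward. This is a simplified sketch, not an actual XDR implementation or API. The `Cluster` class, its methods, and the sample key are hypothetical, and real systems also handle conflict resolution, retries, and filtering, which are omitted here.

```python
from collections import deque


class Cluster:
    """Toy cluster: commits writes locally, ships them to peers later."""

    def __init__(self, name):
        self.name = name
        self.data = {}         # locally committed records
        self.outbox = deque()  # writes waiting to be shipped
        self.peers = []        # remote clusters to replicate to

    def write(self, key, value):
        # Local commit happens immediately; the caller does not wait on peers.
        self.data[key] = value
        self.outbox.append((key, value))

    def ship_pending(self):
        # Asynchronous step: drain the outbox and apply each write on every peer.
        while self.outbox:
            key, value = self.outbox.popleft()
            for peer in self.peers:
                peer.data[key] = value


# Active-active: each cluster accepts writes and ships to the other.
edge = Cluster("edge")
core = Cluster("core")
edge.peers = [core]
core.peers = [edge]

edge.write("track:7", "contact-report")
visible_before = "track:7" in core.data  # False: not yet shipped
edge.ship_pending()
visible_after = "track:7" in core.data   # True: replica now updated
print(visible_before, visible_after)
```

The gap between the local commit and the shipping step is the replication lag, which is the quantity a low-latency design tries to keep as small as possible.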

Always on

C4ISR efforts run around the clock, and so should the infrastructure. Ensure that your database offers five-nines uptime so that it’s fully operational 99.999% of the time with less than six minutes of downtime per year. If a database experiences regular outages for hours at a time, that not only costs time but can cost personnel or compromise missions.
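The arithmetic behind the "less than six minutes" figure is easy to verify. The short sketch below converts an availability percentage into allowed downtime per year; the function name `allowed_downtime_minutes` and the use of a 365.25-day year are choices made for this illustration.

```python
# Minutes in an average year (365.25 days), an assumption of this sketch.
MINUTES_PER_YEAR = 365.25 * 24 * 60


def allowed_downtime_minutes(availability_pct):
    """Maximum downtime per year, in minutes, for a given availability."""
    return MINUTES_PER_YEAR * (1 - availability_pct / 100)


for pct in (99.9, 99.99, 99.999):
    print(f"{pct}% -> {allowed_downtime_minutes(pct):.2f} min/year")
```

Five nines works out to roughly 5.26 minutes per year, while each nine removed multiplies the allowance by ten: about 53 minutes at 99.99% and nearly nine hours at 99.9%.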

While initial software license costs may seem straightforward, agencies should also be aware of other expenses that can quickly escalate as data grows. Different databases have different server, storage, and networking requirements, so it’s important to determine the costs of these components. Additionally, many database vendors use node-based pricing, which causes software license fees to rise along with the amount of hardware deployed.

Under this model, a vendor may require an unnecessary amount of hardware. In the case of databases, that often means a superfluous number of low-density servers built around inefficient in-memory architectures. These in-memory strategies also necessitate more data copies (i.e., higher replication factors), leading to even greater infrastructure demands. To mitigate this problem, check that your database vendor prices by the data volume under management.
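A rough sizing calculation shows how server density and replication factor drive node counts, and therefore node-based license fees. All numbers below are hypothetical and chosen only to make the arithmetic visible. They are not measurements or vendor figures, and `nodes_required` is an illustrative helper.

```python
import math


def nodes_required(data_tb, replication_factor, usable_tb_per_node):
    """Nodes needed to hold every copy of the data set."""
    return math.ceil(data_tb * replication_factor / usable_tb_per_node)


# Hypothetical scenario: 100 TB of unique data.
dense = nodes_required(data_tb=100, replication_factor=2, usable_tb_per_node=10)
sparse = nodes_required(data_tb=100, replication_factor=3, usable_tb_per_node=1)

print(f"high-density nodes, RF=2: {dense}")
print(f"low-density nodes, RF=3: {sparse}")
```

Under these assumed inputs the low-density, higher-replication design needs 15 times as many nodes for the same data, and a per-node license scales the software bill by the same factor.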

Additionally, setting up, managing, upgrading, and troubleshooting databases require a team of skilled professionals, which can strain staffing. It is therefore important to consider the personnel available to manage the infrastructure. While some databases might be manageable initially, they can become complex and resource-intensive as the deployment grows.

To avoid this, agencies should evaluate their resources to ensure that database maintenance and support costs are manageable, and that team members spend less time on routine database tasks so they can direct their skills and efforts toward more critical initiatives.

In an era in which data is omnipresent and accessible around the clock, the success of military operations hinges on leveraging the right technology to handle and deploy data efficiently in defense of our nation’s security.

Cuong Nguyen is vice president, public sector, at Aerospike, a provider of a real-time, high performance NoSQL database designed for applications that cannot experience any downtime and require high read & write throughput.