WASHINGTON ― Artificial intelligence has made incredible progress over the past decade, but the relatively nascent technology still has a long way to go before it can be fully relied upon to think, decide and act in a predictable way, especially on the battlefield.

A new primer from the Center for a New American Security’s technology and national security program highlights some of the promises and perils of AI. While the ceiling for the technology is high, AI is still immature, which means systems are learning by failing in some spectacular, hilarious and ominous ways.

Here are four potential areas of concern:

1. The machine might cheat

Machine learning is a method of AI that allows machines to learn from data to create solutions to problems. But without properly defined goals, the machine can produce flawed outcomes. CNAS’ report identifies numerous examples of AI systems that have engaged in “reward hacking,” where the machine “learns a behavior that technically meets its goal but is not what the designer intended.”


In other words, the machine cheats.

For example, one Tetris-playing bot learned that it could pause the game right before the last block fell, which would have caused it to lose, and so avoid the undesired outcome. In another example, one computer program learned that, rather than taking a test, it was easier to delete the computer file containing the correct answers, resulting in the machine being awarded a perfect score.

In both of these cases the machine accomplishes the goal it was tasked with, but it does not play by the rules human designers expected.

The authors of the report, Paul Scharre and Michael Horowitz, note that in a national security context, such deviation from the designer’s intentions could have serious consequences. They explain, “a cybersecurity system tasked with defending networks against malware could learn that humans are a major source of introducing malware and lock them out (negative side effect). Or it could simply take a computer offline to prevent any future malware from being introduced (reward hacking). While these steps might technically achieve the system’s goals, they would not be what the designers intended.”

2. Bias

AI is only as good as the data that fuels it, which means that if the training data is flawed or tainted, the AI system will be as well. This can occur if the people collecting the data gather corrupted data that is then incorporated into the system’s design.

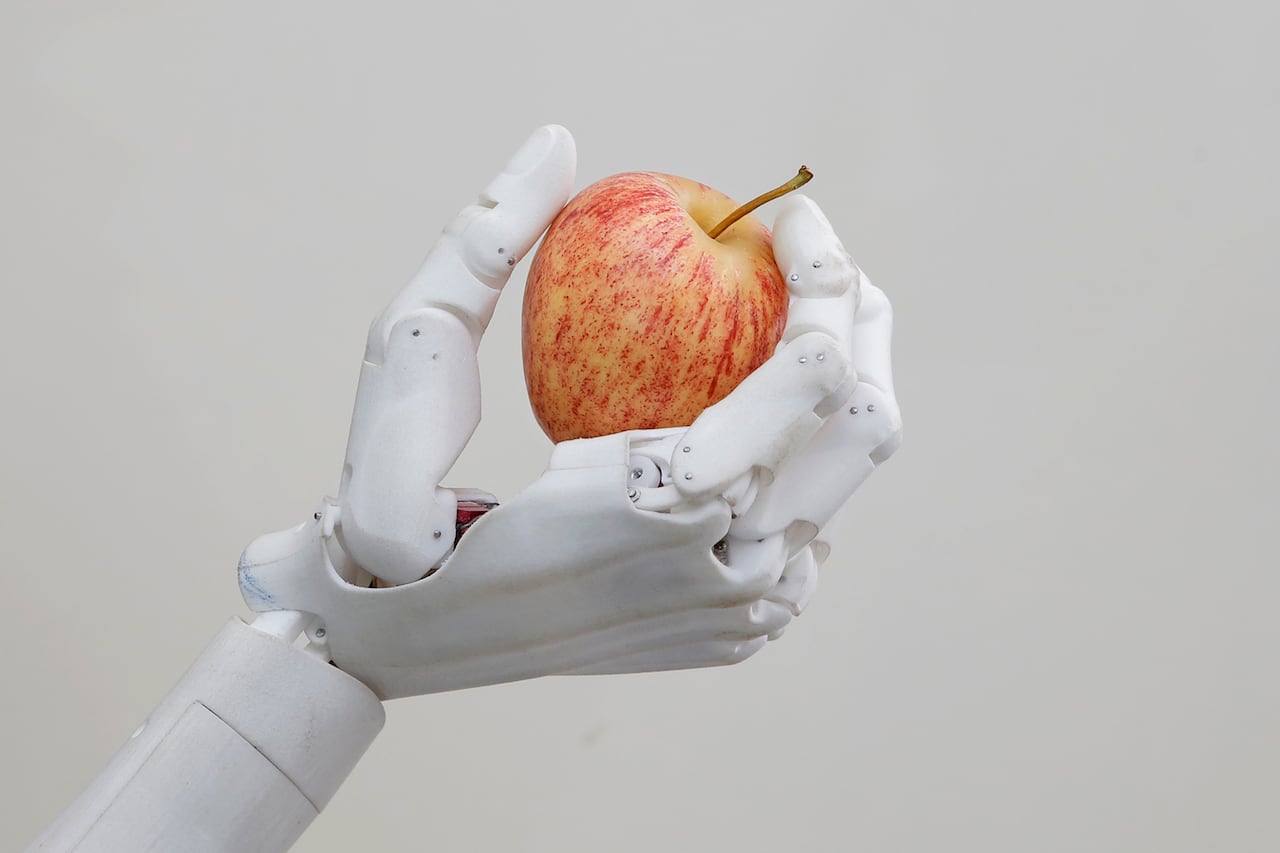

Take a step back from AI. Examples of racial bias already exist in relatively innocuous technology, like automatic soap dispensers. As the video shows, the dispenser is perfectly responsive to a white hand, but fails to sense the hand of a person of color.

Now, the designer likely did not build the dispenser’s sensor to work only for white people, but the operational problem remains nonetheless.

Similar bias in AI meant to serve national security and warfighting applications could lead to catastrophic consequences. Because of limited and biased training data related to real-world operational environments, AI systems intended to lift humans above the fog of war may do anything but that. Scharre and Horowitz explain, “The fog and friction of real war mean that there are a number of situations in any battle that it would be difficult to train an AI to anticipate. Thus, in an actual battle, there could be significant risk of an error.”

For example, an AI image recognition system may be tricked into identifying a 3-D printed turtle as a rifle. Or worse.


Additionally, bad actors may look to exploit this weakness by poisoning training data. The report cites the well-known example of Microsoft’s chatbot Tay, which, within 24 hours on Twitter and after learning from its interactions with humans, went from saying things like “humans are super cool!” to spewing anti-Semitic and misogynist hate speech.

3. “The answer to the ultimate question of life, the universe and everything is 42.”

This line from the cult classic sci-fi series “Hitchhiker’s Guide to the Galaxy” highlights another key issue with AI ― it cannot tell you how it thinks or generates answers. While some AI behavior can be broadly understood given the system’s particular set of rules and training data, other behavior cannot.

“An AI image recognition system may be able to correctly identify an image of a school bus, but not be able to explain which features of the image cause it to conclude that the picture is a bus,” the authors say. “This ‘black box’ nature of AI systems may create challenges for some applications. For instance, it may not be enough for a medical diagnostics AI to arrive at a diagnosis; doctors are likely to also want to know which indicators the AI is using to do so.”

There’s a reason math teachers take points off when students calculate the right answer but do not show their work. Being able to demonstrate the process matters just as much as arriving at the right answer.

4. Human-machine interaction failures

At the end of the day, AI systems are very advanced and complex. This means that human operators need to understand a system’s limitations and capabilities to properly interpret its feedback and avoid accidents.

Scharre and Horowitz point to recent car accidents involving Teslas using the company’s Autopilot feature, including a fatal 2016 crash in Florida that killed the driver. In these cases, human operators failed to appreciate the limitations of self-driving technology, and the consequences were tragic.

Another issue, as with many national security and military technologies, is that designers may struggle to build a product that suits end users’ needs.

“This is a particular challenge for national security applications in which the user of the system might be a different individual than its designer and therefore may not fully understand the signals the system is sending,” the report reads. “This could be the case in a wide range of national security settings, such as the military, border security, transportation security, law enforcement, and other applications where the system’s designer is not likely to be either the person who decides to field the system or the end-user.”

Daniel Cebul is an editorial fellow and general assignments writer for Defense News, C4ISRNET, Fifth Domain and Federal Times.