In 2016, the National Geospatial-Intelligence Agency’s then-Director Robert Cardillo announced the creation of NGA Research, a directorate formerly known as InnoVision. This new team was set to focus on radar, automation, geophysics, geospatial cyber and anticipatory analytics: all emerging technologies that would help the intelligence agency do its job better now and in the future.

In June 2018, Cindy Daniell took the helm and was a natural fit. Daniell, a former program manager at the Defense Advanced Research Projects Agency, has expertise in imaging sensors, signal processing and compression, sensors and solar energy, as well as surveillance technologies for geo-referenced intelligence.

Daniell is also taking the lead on much of NGA’s artificial intelligence work. She spoke with C4ISRNET editor Mike Gruss about automation and how AI can help analysts find something significant to report when in the past there may have been nothing.

C4ISRNET: How does artificial intelligence help NGA now and into the future? The example we hear so often is either image recognition or image processing. “We’ve seen 1 million pictures of dogs; we know this is a dog. We’ve seen 1,000 pictures of barns; we know this is a barn.”

CINDY DANIELL: It helps us in what we call video triage, image triage. One of the key problems and challenges that our analysts have is what we call “nothing significant to report,” or just NSTR. Analysts might be on the floor and constantly just saying, “NSTR, NSTR, NSTR” … hitting the click button to go through those images until they see one that might have something of value. We are looking to AI to filter that, to perform video triage for us and tell us where there’s something significant and meanwhile they can just cull out all of the NSTRs. What happens now is that we are just holding all of this data and some of it doesn’t get looked at.

We’re looking to AI to perform those mundane processes and to help us allow the analysts then to focus on the real problems of complexity. The problems that take spatial analysis, that take correlating patterns of activity together and take more of a strategic reasoning; the AI can process out all of these simple robotics.

C4ISRNET: What’s an example of that?

DANIELL: In Puerto Rico, when all the damage was done with [Hurricane Maria], we had to go through and look very carefully at things like dams that might have infrastructure issues, or power plants. That took analysts time. With AI, what we’re looking to is really automate simple processes like change detection. With a more intelligent change detection we could look at the before and after from the hurricane and be able to determine the infrastructure failure, sensitivities. It’s sensitivities in the infrastructures that have occurred that then cue an analyst.

Some infrastructure might have a subtle shift to it. That would be something that’s done by hand now. We would look to AI to automate and be able to find it.
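For readers unfamiliar with the term, the simplest form of change detection compares a "before" and an "after" image pixel by pixel and flags the scene for review when enough pixels differ. The sketch below illustrates only that basic idea and is not NGA's method. It assumes two co-registered grayscale images of equal size stored as nested lists, and the function names and threshold values are hypothetical.

```python
def change_mask(before, after, pixel_threshold):
    """Mark each pixel whose brightness changed by more than pixel_threshold."""
    same_shape = len(before) == len(after) and all(
        len(row_b) == len(row_a) for row_b, row_a in zip(before, after)
    )
    if not same_shape:
        raise ValueError("before and after images must have the same shape")
    return [
        [abs(a - b) > pixel_threshold for b, a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]


def changed_fraction(mask):
    """Fraction of pixels flagged as changed (0.0 for an empty image)."""
    total = sum(len(row) for row in mask)
    changed = sum(cell for row in mask for cell in row)
    return changed / total if total else 0.0


def should_cue_analyst(before, after, pixel_threshold=30, area_threshold=0.05):
    """Cue a human analyst when the changed area reaches area_threshold."""
    mask = change_mask(before, after, pixel_threshold)
    return changed_fraction(mask) >= area_threshold


# Hypothetical 2x2 example: one pixel changes sharply after the storm.
before = [[10, 10], [10, 10]]
after = [[10, 10], [10, 200]]
cue = should_cue_analyst(before, after)
```

A subtle structural shift of the kind Daniell describes would not stand out in a raw pixel difference like this, which is why she speaks of "more intelligent" change detection.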

C4ISRNET: But NGA also has unique challenges because of its mission.

DANIELL: AI is very useful to us because our problem is much different than the problem in the rest of the world. It’s very easy if you’re going to try to find a cat because there are thousands of images of cats out there. AI takes a lot of training data, so there’s a lot of that information out there and, voila, they can find the cats.

But with us there may be very few examples of this particular feature that works, or that exists in the real world, and even when it does exist it’s hidden behind a branch, it’s in a lot of clutter, it’s at an obscure angle. Most of our resources are satellites. We’re taking a very far remote image of this. We don’t have the luxury of having very clean shots of cats necessarily staring right into the camera, instead of behind a tree. We don’t have the luxury of clean data; we don’t have the luxury of the camera angle, the sensor angle that we want; we don’t have the luxury of very much data.

Think of a Tesla Roadster. They only made 2,000 of them, sold in over 30 countries. They are quite distinct, but imagine trying to find them in the over 1 billion cars in the world.

C4ISRNET: Have there been successes?

DANIELL: We’ve had great success. For instance, we were looking for a particular feature and only had a couple dozen examples. This is compared to ImageNet, which has thousands of examples of the things they’re looking for. We were able to, with great success, find these exemplars with just a couple dozen examples using a technique called low-shot learning. That’s where AI was a huge help in what we do. It’s a needle in a haystack.
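Low-shot learning covers a family of techniques, and the interview does not say which one NGA used. One common and simple variant is a nearest-prototype classifier: average the feature vectors of the few labeled examples per class, then assign a new sample to the class whose average is closest. The sketch below assumes the feature vectors have already been produced by some image model. The labels, vectors and function names are hypothetical.

```python
import math


def centroid(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    count = len(vectors)
    return [sum(v[i] for v in vectors) / count for i in range(len(vectors[0]))]


def build_prototypes(support):
    """Map each label to the mean of its few labeled example vectors."""
    return {label: centroid(vectors) for label, vectors in support.items()}


def classify(query, prototypes):
    """Return the label whose prototype is nearest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Hypothetical support set: only two labeled examples per class.
support = {
    "feature": [[1.0, 1.0], [1.2, 0.8]],
    "background": [[-1.0, -1.0], [-0.8, -1.2]],
}
prototypes = build_prototypes(support)
label = classify([0.9, 1.1], prototypes)
```

With only a handful of examples per class, the quality of the feature vectors matters far more than the classifier itself, which is part of what makes the clutter and odd viewing angles Daniell describes so challenging.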

C4ISRNET: How do you expect government AI contracts to play out for industry?

DANIELL: We follow the DARPA model; I come from DARPA, and part of that model is to engage in seedlings ... the single most critical question that you would ask to buy down the risk for your particular problems you’re trying to solve. The sooner we get through that question, the sooner we can open the larger program in that area. We’re very interested in engaging with the public via email at NGA_Research@nga.mil. We want people to come and visit us; we’d like them to bring their ideas to us and to help us ... tell us what problems, what they see as the future, what problems they would like to solve and bring their ideas to us because we’re very interested in hearing that.

C4ISRNET: What are some of the primary problems you’ve talked to your team, or industry, about solving?

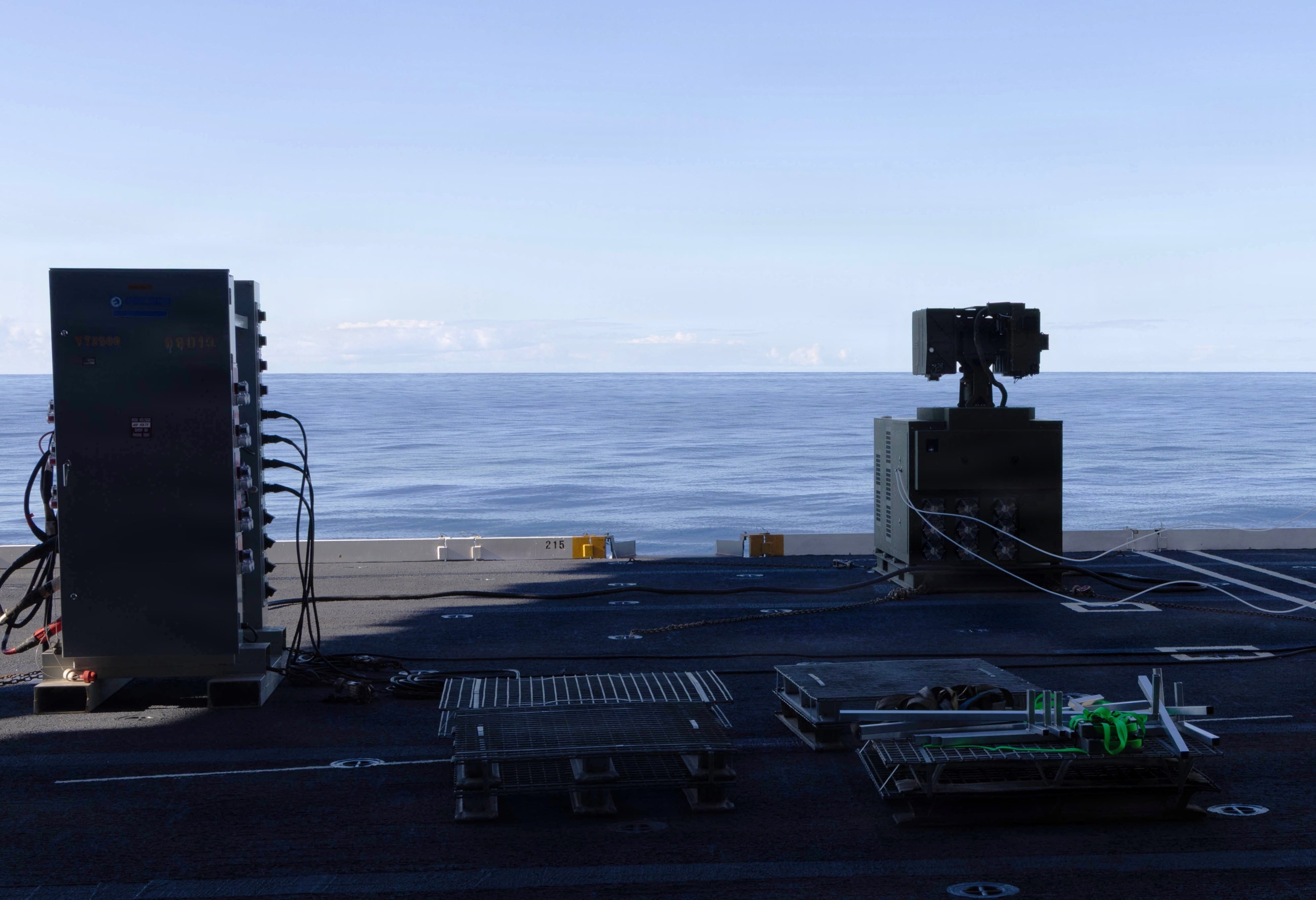

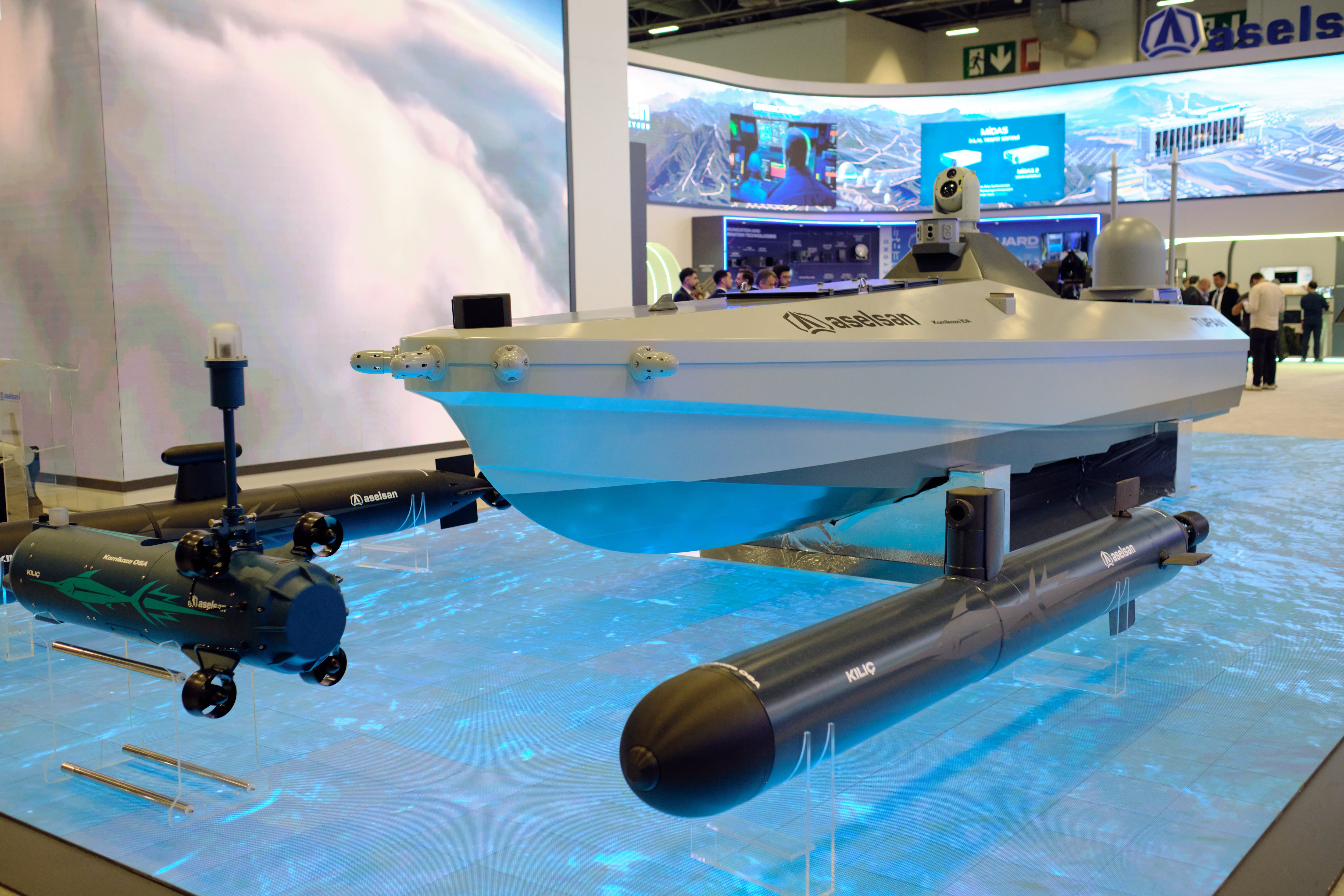

DANIELL: One thing we’re very interested in is the plethora of sources that exist out there. We’ve got all of the [national technical means], the national assets. In addition, we have the huge plethora of commercial space industry that’s out there now, ground sensors … we take in all sorts of data. UAVs that might be flying, we take in their data as well.

One area we’re really interested in is source strategies. Strategies for using ... for optimizing the use of these sources. The right source at the right time for your particular objective. How do you know if you should be using Satellite A versus Satellite B? All the technical details about the sensors, depending on the weather, the humidity, the cloud cover, can help determine whether one sensor might work better than another. We would be very interested in industry ideas on how they dissect that challenge.

C4ISRNET: When you don’t have the clean data, maybe AI could help you determine whether it’s best to use Satellite A vs. Satellite B.

DANIELL: That’s right. It depends on many variables, such as the weather. Satellite A might work differently in cases of higher humidity, but Satellite B might work better in lower humidity. Also, it depends on your objective. Satellite A might work better when you’re looking at a ground target because of the shine coming off the Earth, but Satellite B might work better looking over a lake because you’ve got different reflectivity depending on which terrain you’re over.

AI would work well at dissecting all of that. Also, we’re very interested in the new radar sensors that are in low-Earth orbit. I think they’re not exploited to the capacity that they could be. I think that there’s a lot more room to work with new radar sensors in LEO. Particularly, in having them work together, and constellations of these radar satellites, or these radar sensors ... these clusters. How can they work together to really improve the exploitation of the radar data?

Also, we’re looking in the next layer, the next step in image understanding beyond automatic target recognition.

Right now, everybody’s looking at ATR as the ... you know, looking for the particular thing in the image, but how does that thing in the image relate to everything else around it?

Image understanding, situational awareness beyond ATR, and then finally the last thing I’ll say is automated feature extraction. That goes back to the example I gave you about Puerto Rico. Automated feature extraction, the change in the dam, the change in the mountain range, did it move? Has the fault line moved? All of these things about the Earth, but automated. That’s something that interests us.

Mike Gruss served as the editor-in-chief of Sightline Media Group's stable of news outlets, which includes Army Times, Air Force Times, C4ISRNET, Defense News, Federal Times, Marine Corps Times, Military Times and Navy Times.