For Senator Martin Heinrich, falling behind on the development of artificial intelligence carries an ethical hazard. On May 21, the Land of Enchantment’s junior senator announced a new five-year initiative to expand AI, creatively dubbed the Artificial Intelligence Initiative Act (AI-IA).

Heinrich, D-N.M., wants the bill — cosponsored by Rob Portman, R-Ohio, and Brian Schatz, D-Hawaii — to fund better, more useful and, most importantly, more ethical developments in artificial intelligence throughout government and industry. The danger, central to the AI-IA pitch, is that without a concerted American effort, the future of AI will be decided by forces outside the influence of the United States.

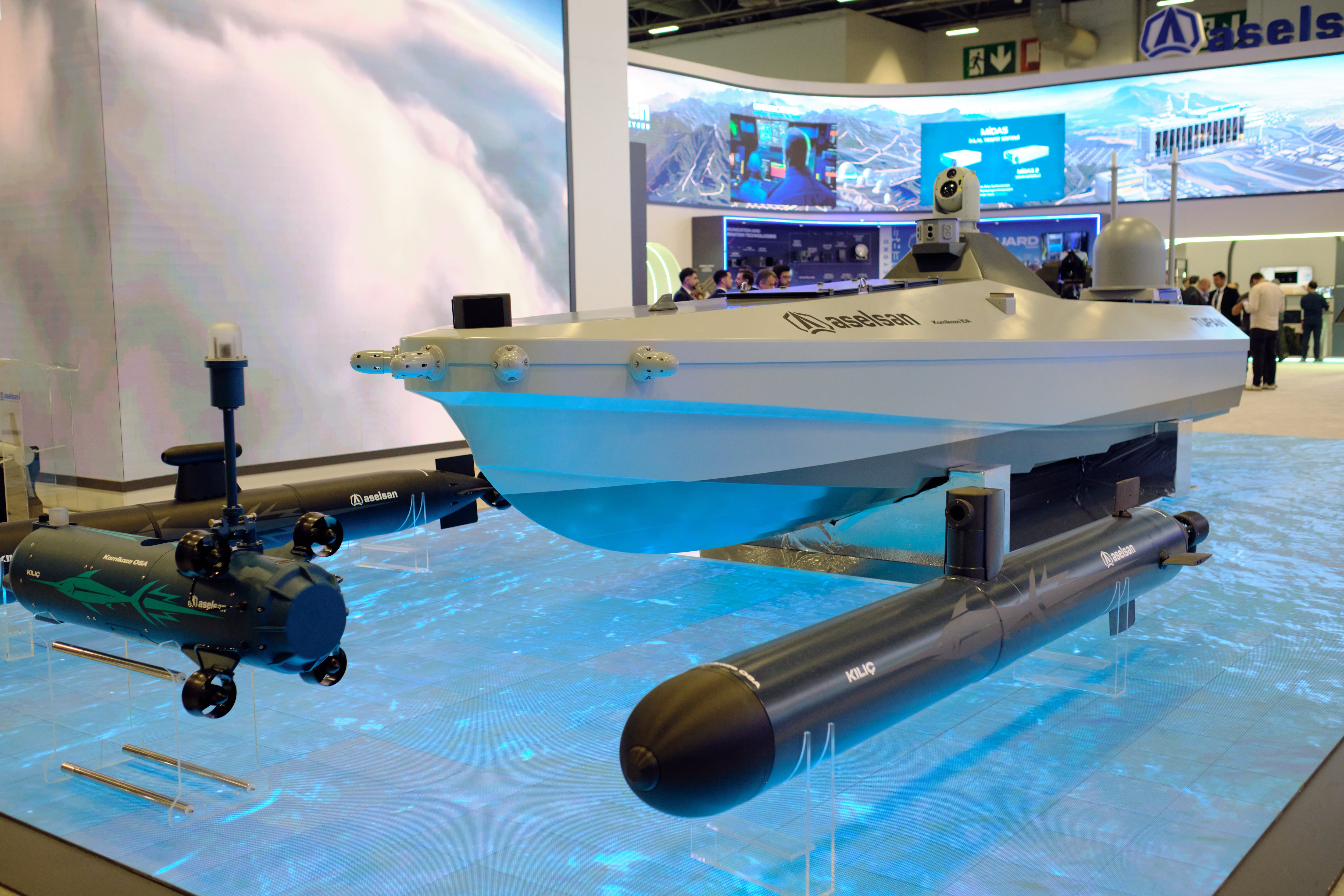

“China's emergence in the AI space poses a great danger to an ethical global adoption of standards and uses of these technologies,” said Heinrich. “They are not a free market economy that respect civil liberties and privacy the way that our country does. We can already see the danger posed by this in the Chinese use of AI and mass surveillance technologies in their oppression of minority Uighurs in western China. We simply cannot allow this type of unethical use of AI technology to proliferate around the globe or to certainly take root here. That's what'll happen unless we step up our efforts here domestically.”

The senator admitted that the United States is unlikely to ever match China in terms of dollar amounts spent on AI, but said it will instead be able to out-innovate in the AI space by using the resources it does allocate more smartly and more inclusively. Part of this means constructing an AI development pipeline at educational institutions, including at least one K-12 school and at least one minority-serving institution. The text of the act notes that it’s important to collaborate “with minority-serving institutions in order to facilitate the sharing of best practices and approaches for increasing and retaining underrepresented populations in the artificial intelligence field.”

Not explicitly stated is the possibility that working with underrepresented populations is a first step to making sure that AI tools developed with federal funding have at least one check in place to mitigate harms those tools could cause to those populations. Existing practices in both harvesting data for AI and implementing that AI tend to reinforce existing power structures and replicate existing errors. Adding more people sensitive to these concerns into the pipeline is likely necessary but insufficient to address critics’ durable complaints about AI development and use.

Set to designate $2.2 billion to spend on AI over the next decade, the act stands to shape the AI field in a major way should it pass. Part of that will be through active effort, by establishing a National AI Coordination Office and related committees to develop a strategic plan for AI research. Some of that will be through the National Institute of Standards and Technology, by requiring it to create standards for evaluating AI algorithms and to assess the quality of training data sets. The National Science Foundation will create educational goals and fund research through educational institutions, shaping the pipeline of AI workers for years to come. And the act will fund an AI research program in the Department of Energy, making up to five new AI research centers available primarily to government and academic researchers but open to private sector use.

Altogether, the act is about building the physical, human and financial infrastructure to sustain, guide and coordinate AI research, on the assumption that shared standards, and having the work housed in the United States, will spill over into ethical use.

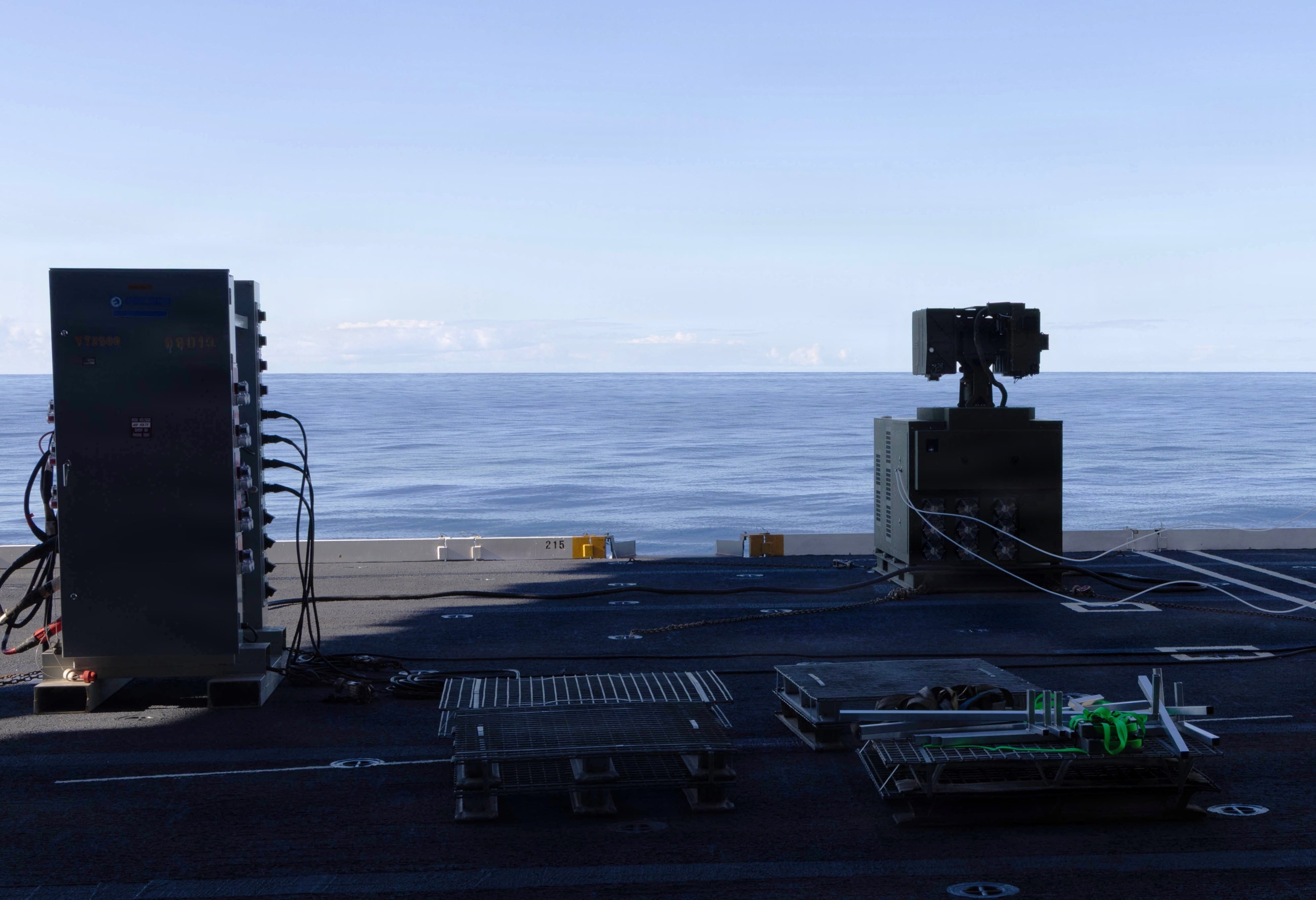

It’s worth noting that the AI-IA is distinct from Heinrich and Portman’s “Armed Forces Digital Advantage Act,” which seeks to improve the AI workforce within the Pentagon. AI is a field-agnostic technology, so efforts to fund research in the civilian world will likely have a spillover effect to military users, especially if the military has the skilled personnel on hand to take advantage of the new technology.

Kelsey Atherton blogs about military technology for C4ISRNET, Fifth Domain, Defense News, and Military Times. He previously wrote for Popular Science, and also created, solicited, and edited content for a group blog on political science fiction and international security.